Daniela Pérez Guerrero, Jesús Manuel Antúnez Domínguez, Aurélie Vigne, Daniel Midtvedt, Wylie Ahmed, Lisa D. Muiznieks, Giovanni Volpe, Caroline Beck Adiels

Microchemical Journal 225, 117685 (2026)

arXiv: 2507.07632

DOI: 10.1016/j.microc.2026.117685

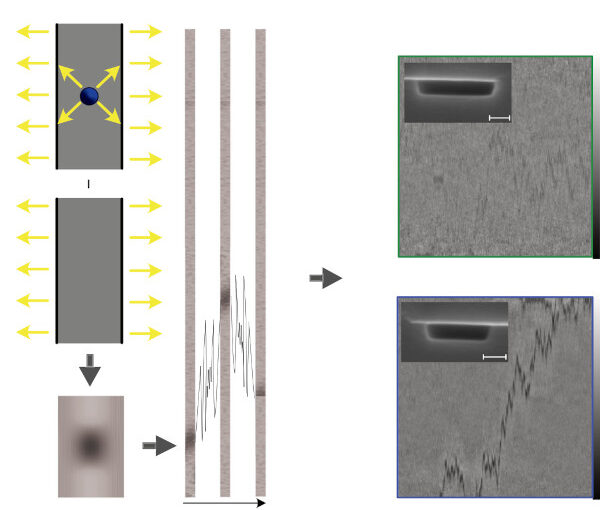

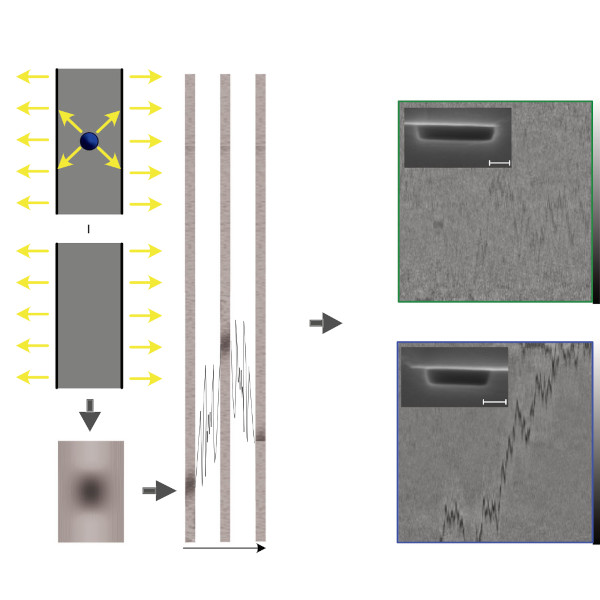

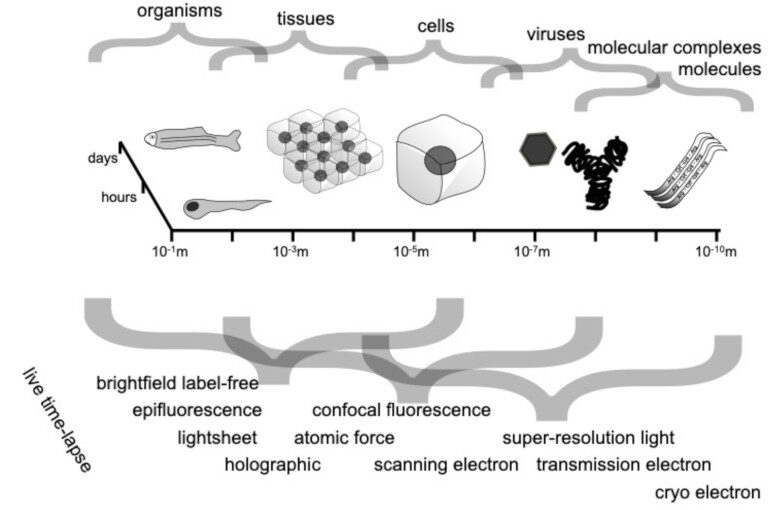

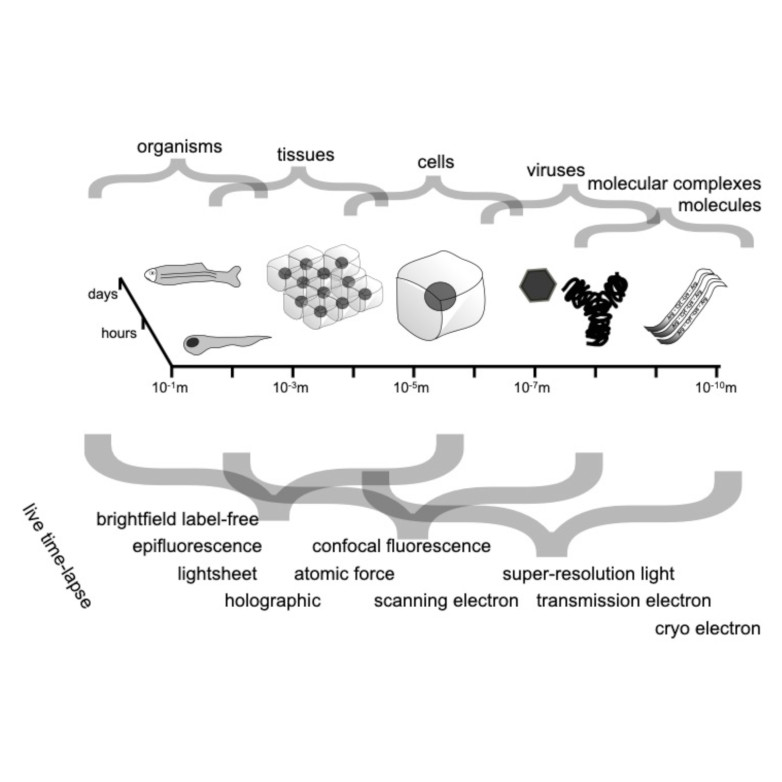

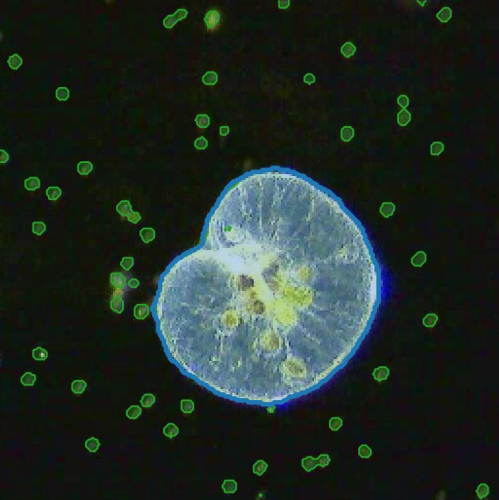

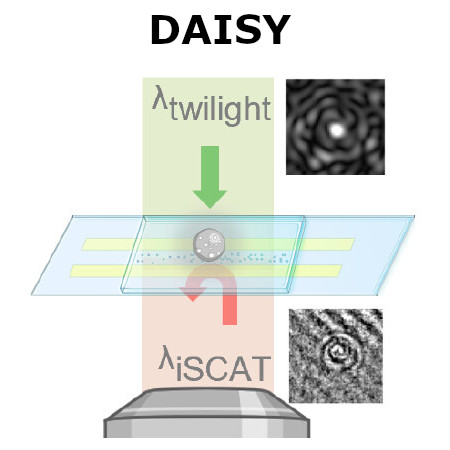

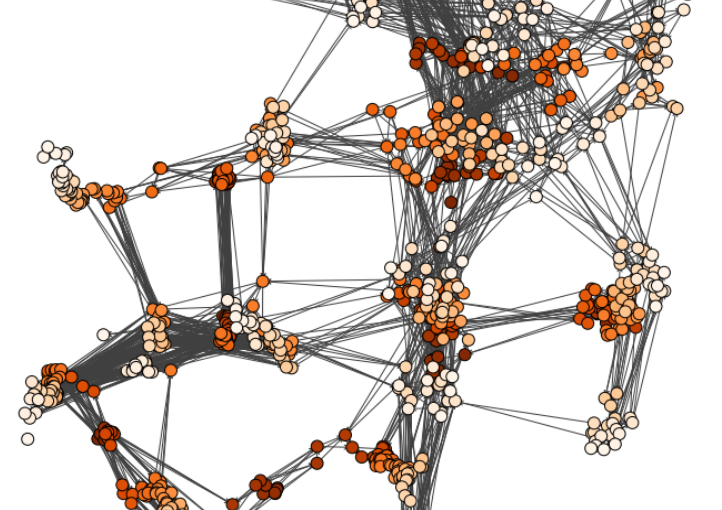

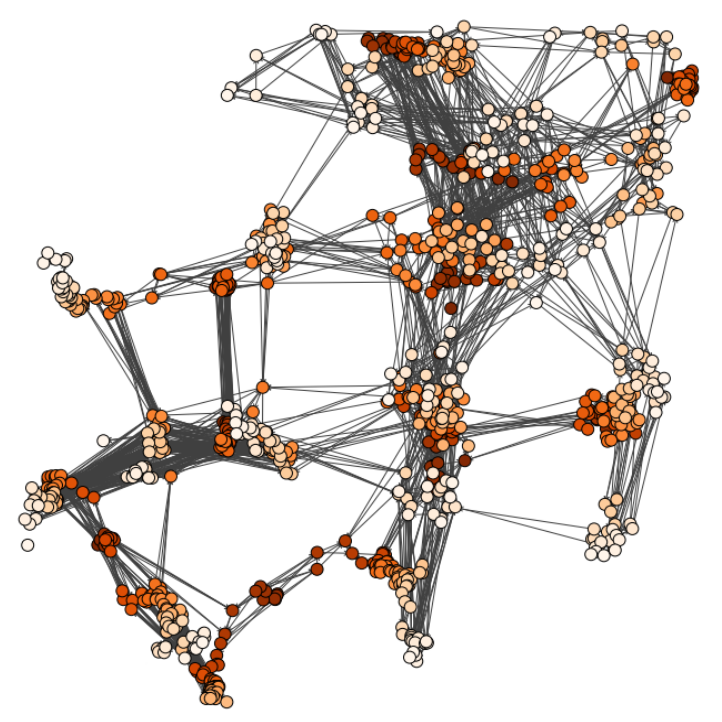

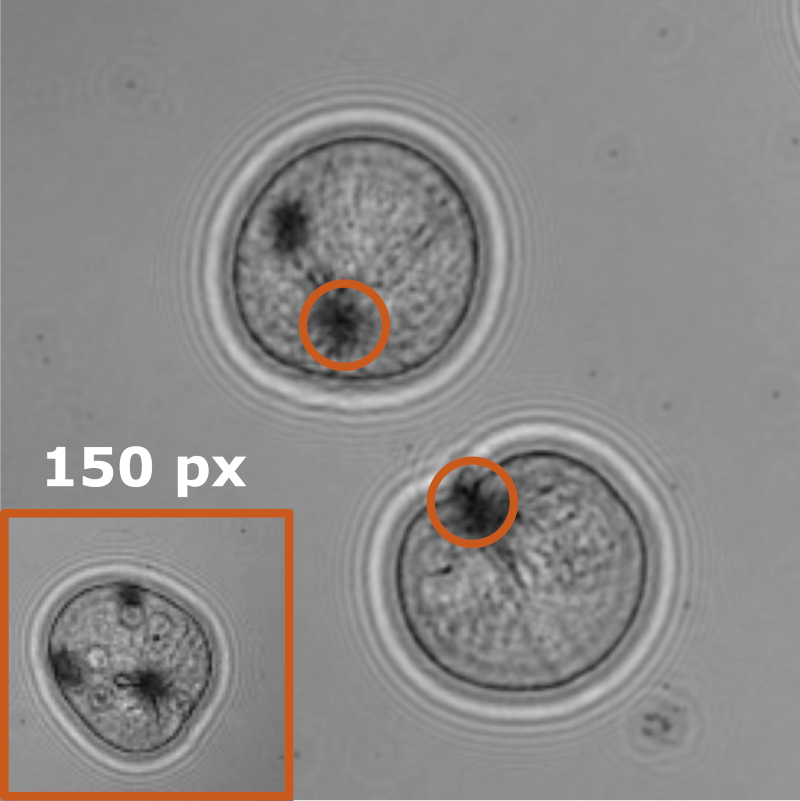

Bacterial biofilms play crucial roles across diverse contexts, from public health risks to beneficial applications in bioremediation, biodegradation, and wastewater treatment. However, tools that enable high-resolution, dynamic analysis of their responses to environmental cues and collective cellular behaviors remain limited. Here, we present a droplet-based microfluidic platform that combines continuous in situ microscopy with subsequent unsupervised deep learning for quantitative analysis of biofilm development. In our setup, *Bacillus subtilis* cells are encapsulated in monodisperse aqueous microdroplets containing Lysogeny Broth, suspended in an oil phase and immobilized within microfabricated traps, providing continuous optical access throughout biofilm formation at the water–oil interface. The platform supports both fluorescence and bright-field imaging, enabling high-throughput, time-resolved monitoring of thousands of droplets under controlled conditions. To extract quantitative information from these large datasets, we developed an automated analysis pipeline based on a Variational Autoencoder (VAE) trained directly on microscopy images from our experiments. This unsupervised model enables segmentation and latent-space representation of bacterial structures without manual annotation or synthetic training data. Post-segmentation size thresholding enables classification of bacterial aggregates and larger biofilm-like clusters, including quantification of biofilm porosity, thereby supporting detailed morphological and temporal analyses across droplets and conditions. By integrating droplet microfluidics with unsupervised deep learning, our platform provides a scalable, robust, and rapid approach for high-throughput quantitative studies of biofilm behavior. It resolves complex structural biofilm patterns, bypasses the need for manual annotation, and opens new opportunities to probe environmental determinants of biofilm formation.
Unlike approaches that rely on manual annotation or synthetic training data, our framework trains unsupervised models directly on experimental images to quantify microbial community dynamics across scales, offering a generalizable platform for future high-resolution microbiology.
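
The post-segmentation step mentioned in the abstract (size thresholding and porosity quantification) can be illustrated with a minimal sketch. It assumes a binary mask (1 = bacteria, 0 = background) has already been produced by the segmentation model. The function names, the 4-connectivity rule, the `min_biofilm_area` threshold, and the definition of porosity as the fraction of enclosed background pixels are illustrative assumptions, not the authors' implementation.

```python
from collections import deque


def connected_components(mask, target=1):
    """Group pixels equal to `target` into 4-connected components.

    Returns a list of components, each a list of (row, col) tuples.
    """
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    components = []
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] != target or seen[r][c]:
                continue
            # Breadth-first flood fill starting from an unvisited pixel.
            queue, pixels = deque([(r, c)]), []
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx] and mask[ny][nx] == target):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            components.append(pixels)
    return components


def classify_by_size(mask, min_biofilm_area):
    """Split foreground components into small aggregates and larger clusters.

    `min_biofilm_area` is a hypothetical pixel-count threshold.
    """
    aggregates, biofilms = [], []
    for comp in connected_components(mask, target=1):
        (biofilms if len(comp) >= min_biofilm_area else aggregates).append(comp)
    return aggregates, biofilms


def porosity(mask):
    """Assumed definition: enclosed background pixels / (foreground + enclosed)."""
    rows, cols = len(mask), len(mask[0])
    foreground = sum(sum(row) for row in mask)  # mask values are 0 or 1
    holes = 0
    for comp in connected_components(mask, target=0):
        touches_border = any(
            y in (0, rows - 1) or x in (0, cols - 1) for y, x in comp
        )
        if not touches_border:
            holes += len(comp)  # background fully surrounded by foreground
    total = foreground + holes
    return holes / total if total else 0.0


# Toy mask: one ring-shaped cluster with a single pore, plus one isolated pixel.
mask = [
    [0, 0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0, 0, 0],
    [0, 1, 0, 1, 0, 1, 0],
    [0, 1, 1, 1, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
]
aggregates, biofilms = classify_by_size(mask, min_biofilm_area=5)
print(len(aggregates), len(biofilms), porosity(mask))
```

On real microscopy data, a library routine for connected-component labeling would be a more practical choice than this pure-Python flood fill, and the area threshold would need to be calibrated against the imaging resolution.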